AI and machine learning may soon help people speak their thoughts. Communication technology has advanced by leaps and bounds, and a number of text-to-speech applications already let speech-impaired people express themselves. AI-based mind-reading applications would make this easier still by sparing the user from typing words and numbers.

Speech can be lost to many causes, some of them surprising: surgical removal of the tongue, spinal cord injury, motor neuron disease, cerebral palsy, apraxia and others. More than 2 million people in the US need digital Augmentative and Alternative Communication (AAC) methods to compensate for a speech deficit.

A study released in 2008 states that about 1% of the British population requires AAC support. It is high time to put mind-reading technology to work not only in commercial applications but also in people's day-to-day activities.

Advancement in communication technology as instruction interpreters:

After the invention of the first text-to-machine device in 1969, the next visible advance in AAC technology came in 1986, when Stephen Hawking began using a speech device known as the Equalizer.

He could press a switch to select words, first on a desktop computer and later on a small computer attached to his wheelchair. But the technology missed one vital factor: letting users keep their own voice. Hawking's new voice sounded robotic and had an American accent, to which he gradually adjusted.

Hawking's case was not unique. Millions of people using AAC-supported communicators accepted generic voices that sounded alike irrespective of age, sex and ethnicity. The voice of a little girl could sound unsettlingly similar to that of a 60-year-old.

By 2014, a new development in communication technology made it possible to create customized digital voices, which led to the online platform Human Voicebank by VocaLiD. People of any age and sex can donate their voices, which are stored in a large database and matched to the age of the user who needs AAC support.

Artificial intelligence in bridging the gap:

Mind-reading AI and machine learning have made it possible to interpret brain waves, enabling immobilized people to speak their minds. Software has been developed that can read brain waves and match them with suitable words or pictures. Advances in communication technology have also made real-time speech-to-text conversion possible, so that silent instructions can be answered through a special audio output.

Mind reading AI decoding hearing and speaking:

A mind-reading device developed by scientists at the University of California not only converts thoughts into text with more than 90% accuracy but can also pick up what the person is hearing.

Electrodes implanted in the brain to treat epilepsy were also used to monitor activity in the auditory cortex. The scientists used algorithms to decode, from these recordings, the speech sounds the person heard. This is a pioneering step toward an externally worn device in which AI can transcribe “perceived” and “produced” thoughts into speech.

Brain-computer interface - Translating thoughts into speech for paralyzed patients:

The brain-computer interface has caused a sensation in communication technology by enabling paralyzed patients to communicate better. It not only helps them speak their thoughts but also interprets what they hear.

Electrodes implanted in the brain pick up electrical impulses and transmit them wirelessly to a computer, which decodes them into speech or text. If such a system can decode even simple messages from patients, such as yes, no, or expressions of hunger, pain and thirst, it would go a long way in helping them overcome the communication barrier and improve treatment outcomes.

Mind-reading device linked to smartphone apps:

In another striking advance in mind-reading AI, Japanese scientists have invented an easy-to-operate device that can be connected to smartphone apps. EEG recordings taken while a person without speech impairment spoke were matched with syllables and numbers through machine learning.

The newly developed technology recognizes the digits 0 to 9 from EEG brain waves with 90% accuracy. The application is likely to come into use within the next few years.
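To make the idea of matching brain-wave recordings to digits concrete, here is a minimal Python sketch of one simple classification technique, a nearest-centroid classifier. It is not the researchers' method, which the article does not describe. The feature vectors are purely synthetic stand-ins for EEG features, and every name in it (`synthetic_features`, `train`, `classify`, `accuracy`) is hypothetical.

```python
import math
import random

DIGITS = range(10)

def synthetic_features(digit, rng, noise=0.1):
    """Return a toy 10-value feature vector for one 'recording' of `digit`.

    Assumption for illustration only: feature `digit` is high and the rest
    are near zero. Real EEG features are far noisier and less separable.
    """
    return [(1.0 if i == digit else 0.0) + rng.uniform(-noise, noise)
            for i in DIGITS]

def train(samples):
    """Average the training vectors of each digit into one centroid."""
    model = {}
    for digit, vectors in samples.items():
        model[digit] = [sum(column) / len(vectors) for column in zip(*vectors)]
    return model

def classify(model, features):
    """Pick the digit whose centroid is closest (Euclidean distance)."""
    return min(model, key=lambda digit: math.dist(model[digit], features))

def accuracy(model, labelled):
    """Fraction of (digit, features) pairs that are classified correctly."""
    correct = sum(1 for digit, features in labelled
                  if classify(model, features) == digit)
    return correct / len(labelled)

rng = random.Random(0)
training = {d: [synthetic_features(d, rng) for _ in range(20)] for d in DIGITS}
model = train(training)
test_set = [(d, synthetic_features(d, rng)) for d in DIGITS for _ in range(5)]
print(f"accuracy on toy data: {accuracy(model, test_set):.0%}")
```

Because the toy data is deliberately clean, this sketch says nothing about achievable accuracy on real recordings. It only shows the train-then-match structure that such a system would need.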

AlterEgo – The device that hears your internal voice:

Image Source : insidescandinavianbusiness.com

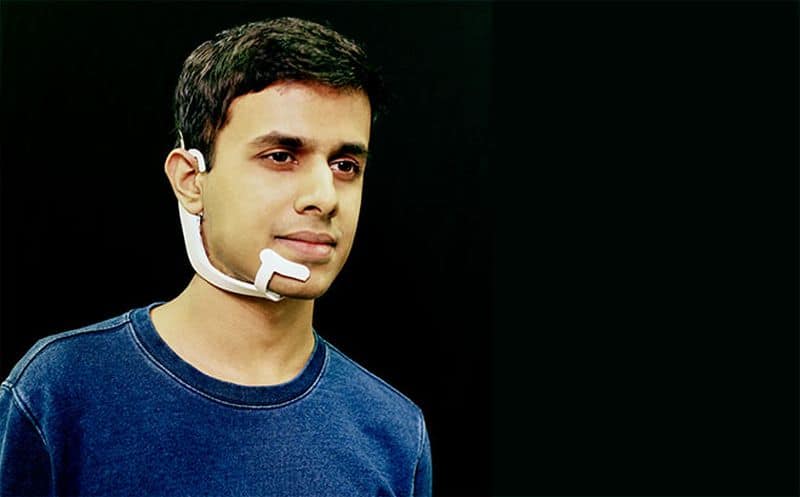

AlterEgo is perhaps the most surprising device of all: a headset that can hear your internal voice and respond to your queries without you actually speaking. Whatever the user says internally, AlterEgo transcribes via electrodes attached to the skin. The audio output is delivered through bone conduction.

The user can send commands to a virtual assistant, and the response is audible only to the user. For example, if the user silently asks, “What is the time?”, the virtual assistant answers in a way that only the user can hear. This enables real-time silent queries and audible answers in a range of situations.

Described as an intelligence-augmentation device, AlterEgo takes communication technology several steps ahead: a person can use it anywhere and get answers to queries without moving their lips.

How it works

The white device is worn externally: it covers one side of the jaw, ends beneath the lips and hooks over the top of the ear. Four electrodes fixed under the device stay in contact with the skin and pick up neuromuscular signals when the person silently thinks of a command.

When a person thinks of a command, the device's artificial intelligence matches the resulting signals with specific words and feeds them into a computer. The response sent by the computer plays through the bone conduction speaker, so the user hears the answer clearly without a separate earphone.
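The flow described above (electrode signals, word matching, a query to a computer, a spoken reply) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not AlterEgo's actual implementation: the per-word templates, the distance threshold, the canned assistant and all function names are hypothetical.

```python
import math

# Hypothetical templates: one averaged reading per electrode (4 electrodes)
# for each word the toy system knows. Values are made up for illustration.
TEMPLATES = {
    "what": (0.9, 0.1, 0.3, 0.2),
    "is": (0.1, 0.2, 0.9, 0.1),
    "the": (0.3, 0.3, 0.2, 0.9),
    "time": (0.2, 0.8, 0.1, 0.4),
}

def match_word(frame, templates=TEMPLATES, max_distance=0.5):
    """Return the word whose template is nearest to `frame`, or None
    when nothing is close enough (for example, background noise)."""
    word, distance = min(
        ((w, math.dist(t, frame)) for w, t in templates.items()),
        key=lambda pair: pair[1],
    )
    return word if distance <= max_distance else None

def decode(frames):
    """Turn a sequence of 4-electrode frames into a text query."""
    words = (match_word(frame) for frame in frames)
    return " ".join(w for w in words if w is not None)

def assistant_reply(query, clock="10:30"):
    """Stand-in for the virtual assistant; `clock` is a fixed demo value."""
    if query == "what is the time":
        return f"It is {clock}"
    return "Sorry, I did not catch that"

def bone_conduction_play(text):
    """Stand-in for the bone conduction speaker: just tags the text."""
    return f"[bone conduction] {text}"

frames = [
    (0.85, 0.15, 0.30, 0.20),  # close to "what"
    (0.00, 0.00, 0.00, 0.00),  # noise, matches nothing
    (0.10, 0.25, 0.85, 0.10),  # close to "is"
    (0.30, 0.30, 0.25, 0.90),  # close to "the"
    (0.20, 0.75, 0.10, 0.45),  # close to "time"
]
query = decode(frames)
print(bone_conduction_play(assistant_reply(query)))
```

A real system would learn its signal-to-word mapping from each user's data, which is presumably what the per-user customization time reported for the trial is spent on. The fixed templates here only stand in for that learned model.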

Cellphones have become an integral part of our lives. Yet to look something up, we have to take out the phone, open a specific app and type a search term. In the middle of a conversation, this is a serious disruption that pulls our attention away entirely. A device that answers silent queries avoids that interruption.

It is highly accurate

AlterEgo responded with 92% accuracy in a trial with 10 people, each requiring about 15 minutes of customization time. Although this is a few points below the 95% accuracy of Google's voice transcription service, the researchers are optimistic about improving it over time.

The team is currently working to expand the range of words AlterEgo can detect. The ultimate aim is to make interaction through internal vocalization more personal and intimate than is possible with Alexa or Siri. Its application would not be restricted to medical care but would extend to everyday use, for example in noisy environments.

Mind-reading AI is set to add a new dimension to communication technology as researchers come up with more easy-to-use devices that make communication as simple as possible for everyday use.